KV Cache transfer - Gemma 4 models

This is part 2 of the Cross-quant KV-cache transfer study, focusing on the Gemma 4 model family.

As before, I used llama.cpp [1] and Unsloth [2] quantized models.

The process is the same as in part 1:

- ref - the BF16 model processes the prompt and generates 2048 tokens for each of ~20 prompts. Its logits are saved as ground truth.

- target - the quantized model replays the same token sequence. This is the “just use a lower quant” baseline.

- handoff - the BF16 model processes the prompt; its KV cache is saved and loaded into the quantized model, which then replays the generated tokens.

We compare using KL divergence from the ref logits - lower means closer to BF16. If handoff KLD is lower than target KLD, the higher-quality KV cache is helping.
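To make the metric concrete, here is a minimal sketch of how per-position KLD could be computed from saved logits. It is not the experiment code: the function names, the array shapes, and the direction KL(ref || test) are my assumptions, and the logits are synthetic noise rather than model output.

```python
import numpy as np

def log_softmax(logits):
    # Numerically stable log-softmax over the vocabulary axis.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))

def kl_per_position(ref_logits, test_logits):
    # KL(ref || test) at each generation position.
    # Both inputs are assumed to be shaped (positions, vocab).
    ref_logp = log_softmax(ref_logits.astype(np.float64))
    test_logp = log_softmax(test_logits.astype(np.float64))
    return (np.exp(ref_logp) * (ref_logp - test_logp)).sum(axis=-1)

# Synthetic stand-ins for saved logits (hypothetical data, not real runs):
# "target" is perturbed more than "handoff" to mimic the hoped-for effect.
rng = np.random.default_rng(0)
ref = rng.normal(size=(8, 32))
target = ref + rng.normal(scale=0.30, size=ref.shape)
handoff = ref + rng.normal(scale=0.10, size=ref.shape)

kld_target = kl_per_position(ref, target)
kld_handoff = kl_per_position(ref, handoff)

print("mean target KLD: ", kld_target.mean())
print("mean handoff KLD:", kld_handoff.mean())
print("handoff helps:   ", kld_handoff.mean() < kld_target.mean())
```

Keeping the result per position, rather than reducing to a single mean right away, is what allows plotting KLD against generation position.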

One difference from the previous study is the use of BF16 as reference instead of Q8.

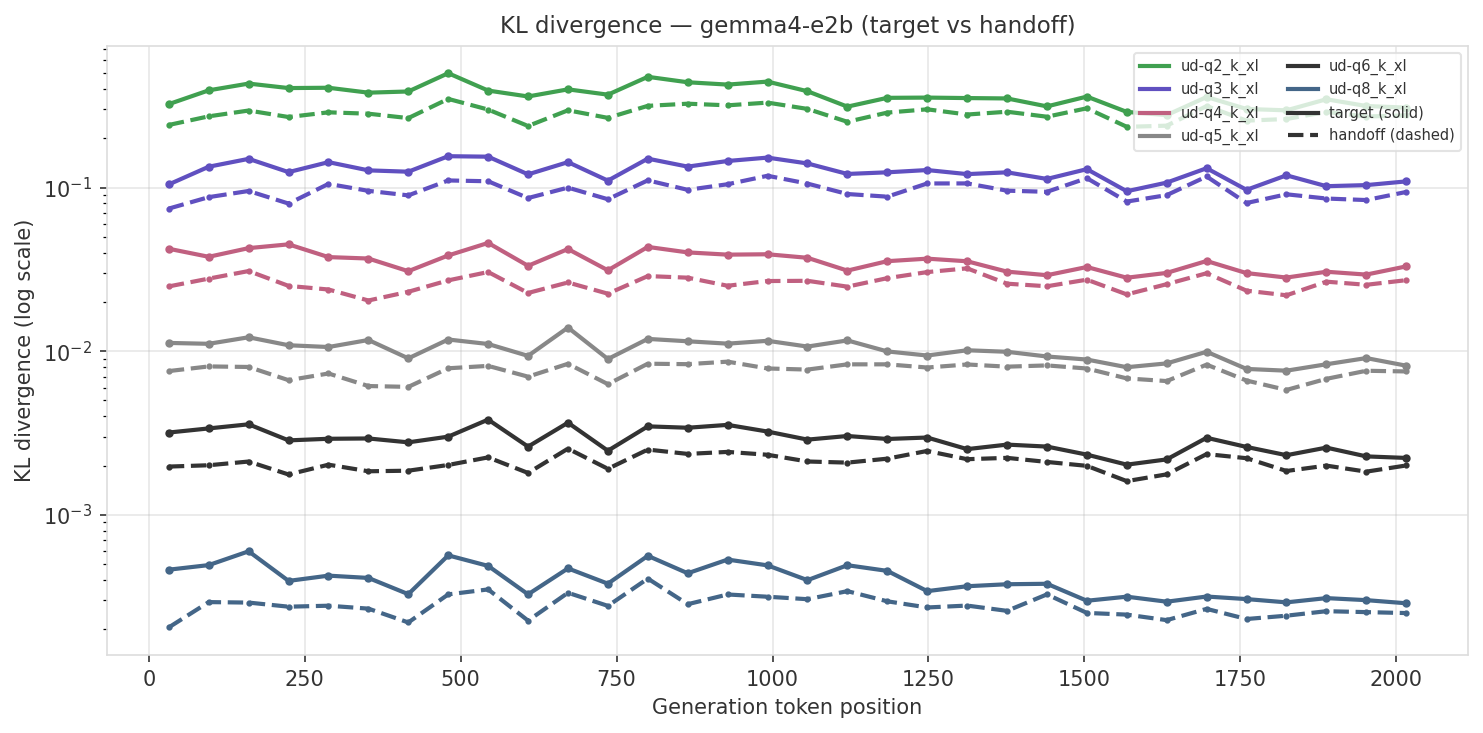

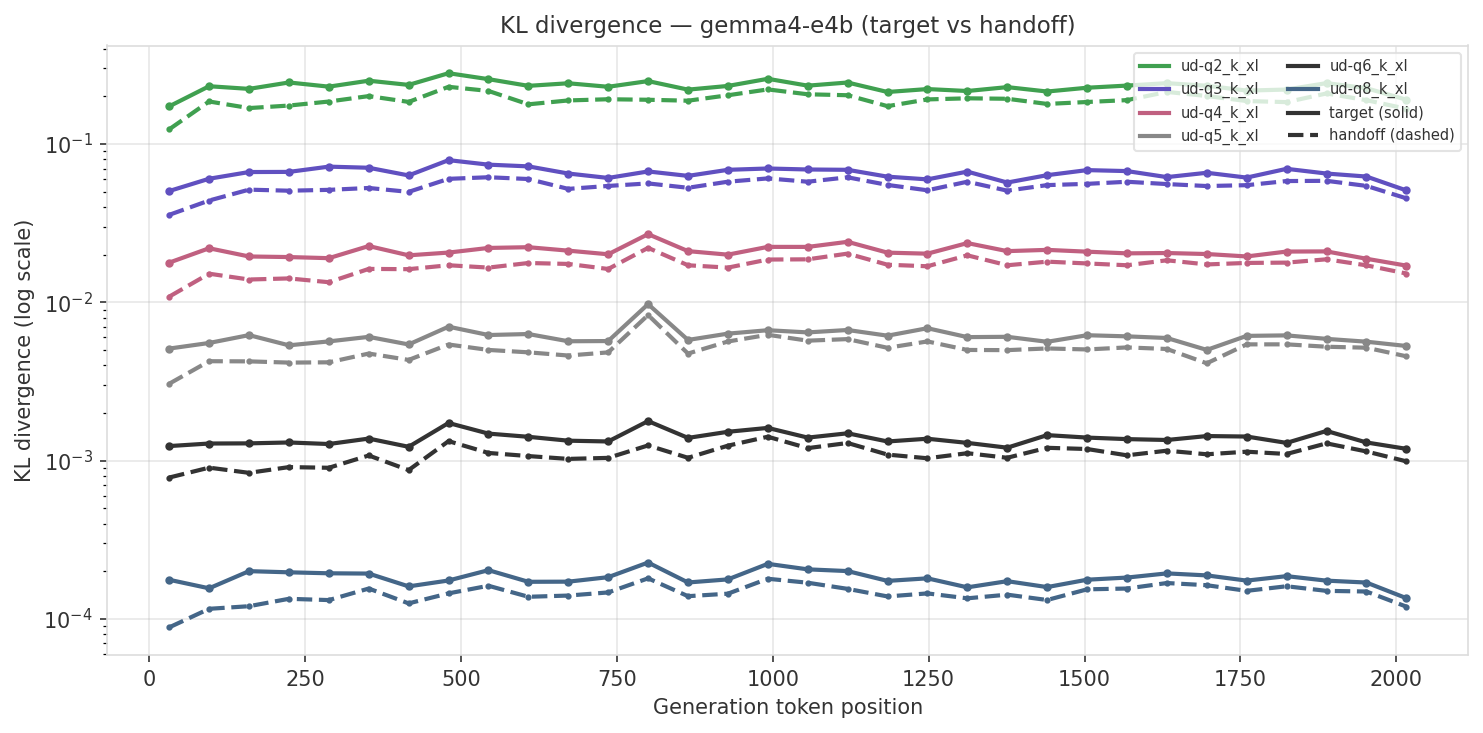

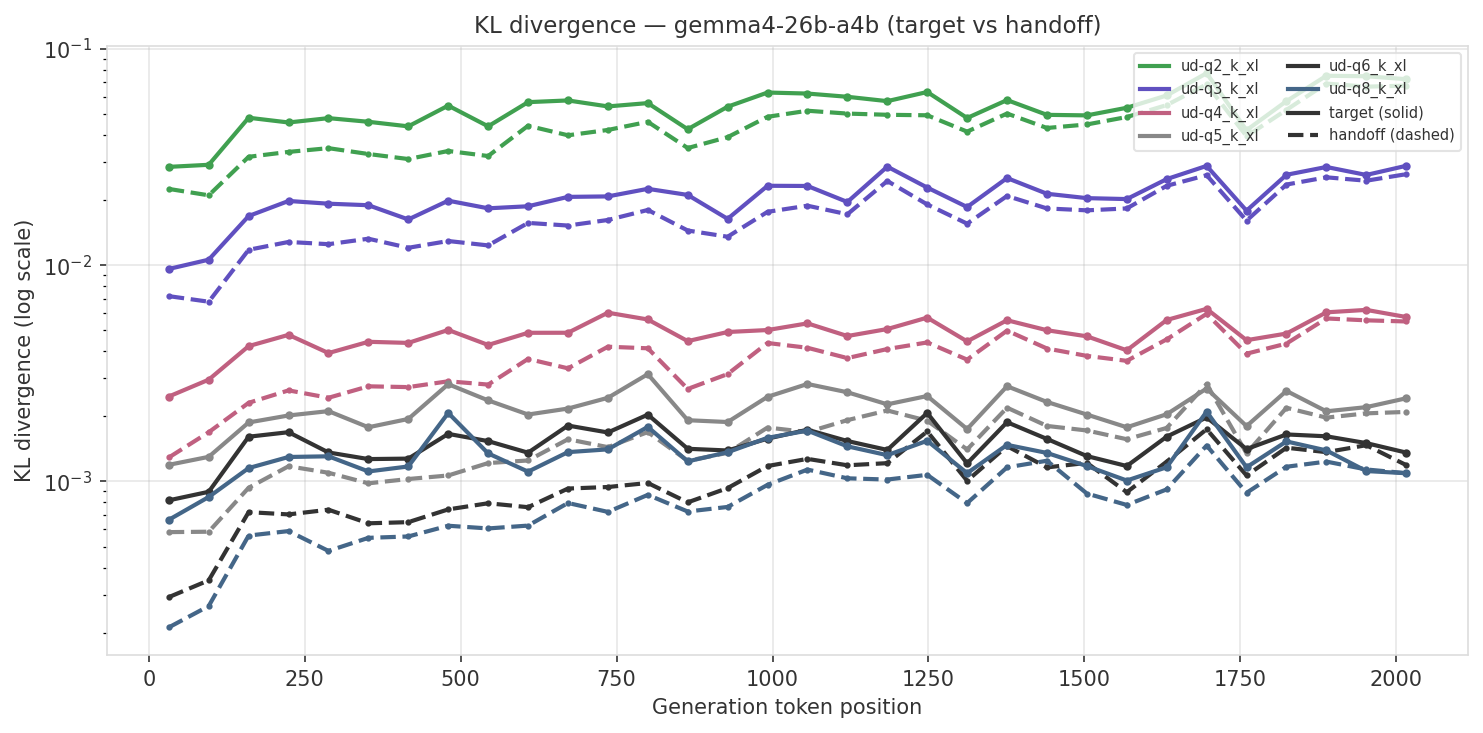

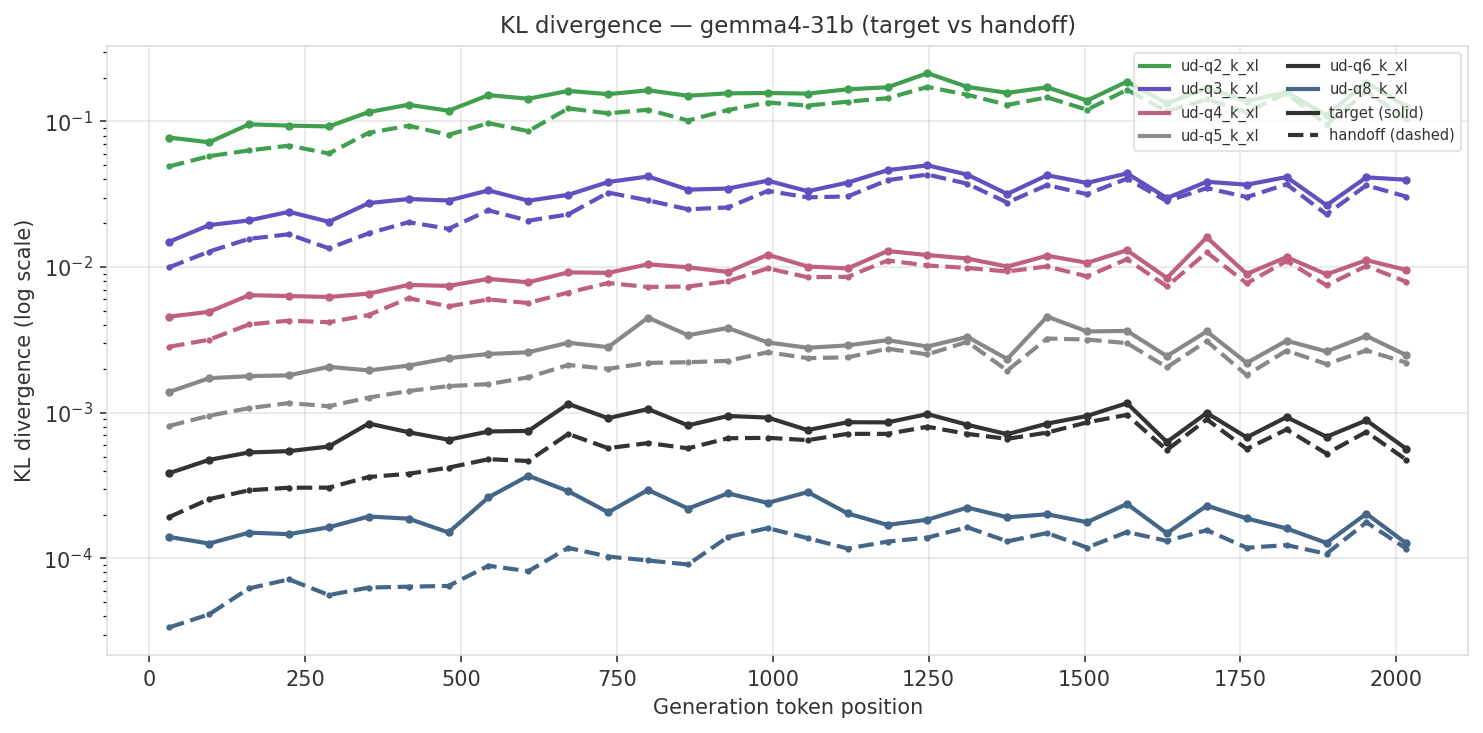

The charts below show absolute KL divergence (log scale) across generation position. Solid lines are target (no transfer), dashed lines are handoff (with KV cache transfer). Each color is a different quant level.

For Gemma models we see the same patterns - the effect decays as more tokens are generated by the quantized model. Interestingly, similar to Qwen 3.5, the small MoE (26B-A4B) shows the biggest impact - Q5 and Q6 with handoff shows lower KLD than Q8 without.

Gemma 4 E2B

Gemma 4 E4B

Gemma 4 26B-A4B (MoE)

Gemma 4 31B

References

- [1] llama.cpp - C/C++ LLM inference library

- [2] Unsloth Gemma 4 collection - quantized GGUF models

- [3] Cross-quant KV-cache transfer - part 1 of this study (Qwen 3.5 + Gemma 4-31B with Q8 reference)

- [4] kv-transfer - experiment code