Cross-quant KV-cache transfer

LLM prompt processing (or ‘prefill’) and token generation (or ‘decode’) have very different compute profiles. Prompt processing reads the model weights only a few times, because long token sequences can be processed in large batches. During token generation the weights must be read once for every generated token, so the model has to fit into fast memory.

Let’s take Gemma 4 31B as a concrete example. Its Q8 quantization is 33 GB - too large for a single GPU like the NVIDIA 5090 (32 GB VRAM). But Q5 at 20 GB fits comfortably. Both share the same architecture, just different weight precision.

We could use Q8 for prefill only - load it in chunks, process the prompt batch by batch, and discard it. Generation then continues with Q5. This is practical because prompt processing only needs to load the weights a few times (large batches), while generation loads them once per token. A similar approach can be applied to disaggregated prefill/decode setups.
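To make the "a few times versus once per token" argument concrete, here is a back-of-the-envelope sketch. The model sizes come from the Gemma 4 31B example above; the prompt length, batch size, and number of generated tokens are illustrative assumptions, not measurements. The estimate also assumes a dense model where every pass reads all weights once, and it ignores KV-cache and activation traffic.

```python
import math


def weight_passes_prefill(prompt_tokens: int, batch_size: int) -> int:
    """Number of full passes over the weights needed to process the prompt."""
    return math.ceil(prompt_tokens / batch_size)


def weight_traffic_gb(model_size_gb: float, passes: int) -> float:
    """Total weight data read, in GB, assuming each pass reads all weights once."""
    return model_size_gb * passes


# Sizes taken from the text above.
Q8_SIZE_GB = 33.0
Q5_SIZE_GB = 20.0

# Assumed values, chosen only for illustration.
PROMPT_TOKENS = 8000
BATCH_SIZE = 2048
GENERATED_TOKENS = 1000

prefill_passes = weight_passes_prefill(PROMPT_TOKENS, BATCH_SIZE)
prefill_gb = weight_traffic_gb(Q8_SIZE_GB, prefill_passes)
decode_gb = weight_traffic_gb(Q5_SIZE_GB, GENERATED_TOKENS)

print(f"prefill: {prefill_passes} passes, {prefill_gb:.0f} GB of Q8 weights read")
print(f"decode:  {GENERATED_TOKENS} passes, {decode_gb:.0f} GB of Q5 weights read")
```

Under these assumptions, streaming the larger Q8 weights a handful of times for prefill is a small cost next to the per-token weight reads during generation, which is why only the decode model needs to stay resident in fast memory.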

The information from prefill is transferred via the KV cache. It is stored at the same precision (F16) regardless of weight quantization - what changes is the quality of the model that produced it.

This experiment tests the first step: does a KV cache produced by Q8 actually help a more aggressively quantized model generate better output? The chunked-loading scenario is not in scope. We process the prompt with Q8, save the KV cache, hand it off to Q5 (or Q2, Q3, etc.), and measure how much closer the output stays to the Q8 reference.

Setup

For each prompt we run three configurations and collect logits at every token position:

- ref - Q8 processes the prompt and generates tokens. This is the ground truth.

- target - Q2 replays the same token sequence. This is the “just use Q2” baseline.

- handoff - Q8 processes the prompt, its KV cache is saved and loaded into Q2, which then replays the generation tokens.

We compare using KL divergence from the ref logits - lower means closer to Q8 quality. If handoff KLD is lower than target KLD, the Q8 KV cache is helping. We compute KLD not on some pre-defined text but on the tokens generated by the reference model.
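The comparison can be sketched in a few lines of plain Python. This is a minimal illustration, not the experiment code: it assumes the divergence is taken as KL(ref || other) per token position, and that "p99" means the 99th percentile over positions. The function names and the toy logits are made up for the example.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over one vector of logits."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def kl_divergence(ref_logits, test_logits):
    """KL(P_ref || P_test) in nats for a single token position."""
    ref_lp = log_softmax(ref_logits)
    test_lp = log_softmax(test_logits)
    return sum(math.exp(r) * (r - t) for r, t in zip(ref_lp, test_lp))


def percentile(values, q):
    """q-th percentile (0-100), linearly interpolated between sorted values."""
    if not values:
        raise ValueError("percentile of an empty sequence")
    s = sorted(values)
    pos = (len(s) - 1) * q / 100.0
    lo, hi = math.floor(pos), math.ceil(pos)
    return s[lo] + (s[hi] - s[lo]) * (pos - lo)


def p99_kld(ref_seq, test_seq):
    """99th percentile of per-position KLD between two logit sequences."""
    klds = [kl_divergence(r, t) for r, t in zip(ref_seq, test_seq)]
    return percentile(klds, 99)


# Toy logits over a 3-token vocabulary at two positions (synthetic values).
ref = [[2.0, 0.5, -1.0], [0.1, 1.5, 0.3]]
target = [[1.0, 1.0, -0.5], [0.8, 0.9, 0.2]]
handoff = [[1.8, 0.6, -0.9], [0.2, 1.3, 0.3]]

print("target  p99 KLD:", p99_kld(ref, target))
print("handoff p99 KLD:", p99_kld(ref, handoff))
```

In this toy data the handoff logits were deliberately placed closer to the reference, so the handoff KLD comes out lower; in the real experiment that is the quantity being measured rather than assumed.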

We tested three models from the Qwen 3.5 series and one from Gemma 4, each with Q8 as the reference:

- Qwen 3.5-2B - dense, 2.8B parameters

- Qwen 3.5-35B-A3B - MoE, 35B total / 3B active

- Qwen 3.5-397B-A17B - MoE, 397B total / 17B active

- Gemma 4-31B - dense, 31B parameters

14 test prompts: 8 short code tasks (~200-750 tokens), 4 medium-size code reviews (~1800-2200 tokens) and 2 large code reviews (~7800-7900 tokens). All inference runs use the llama.cpp [1] library with the recommended sampling arguments from the Unsloth guide [2]. The experiment code is available on GitHub [4].

p99 KLD

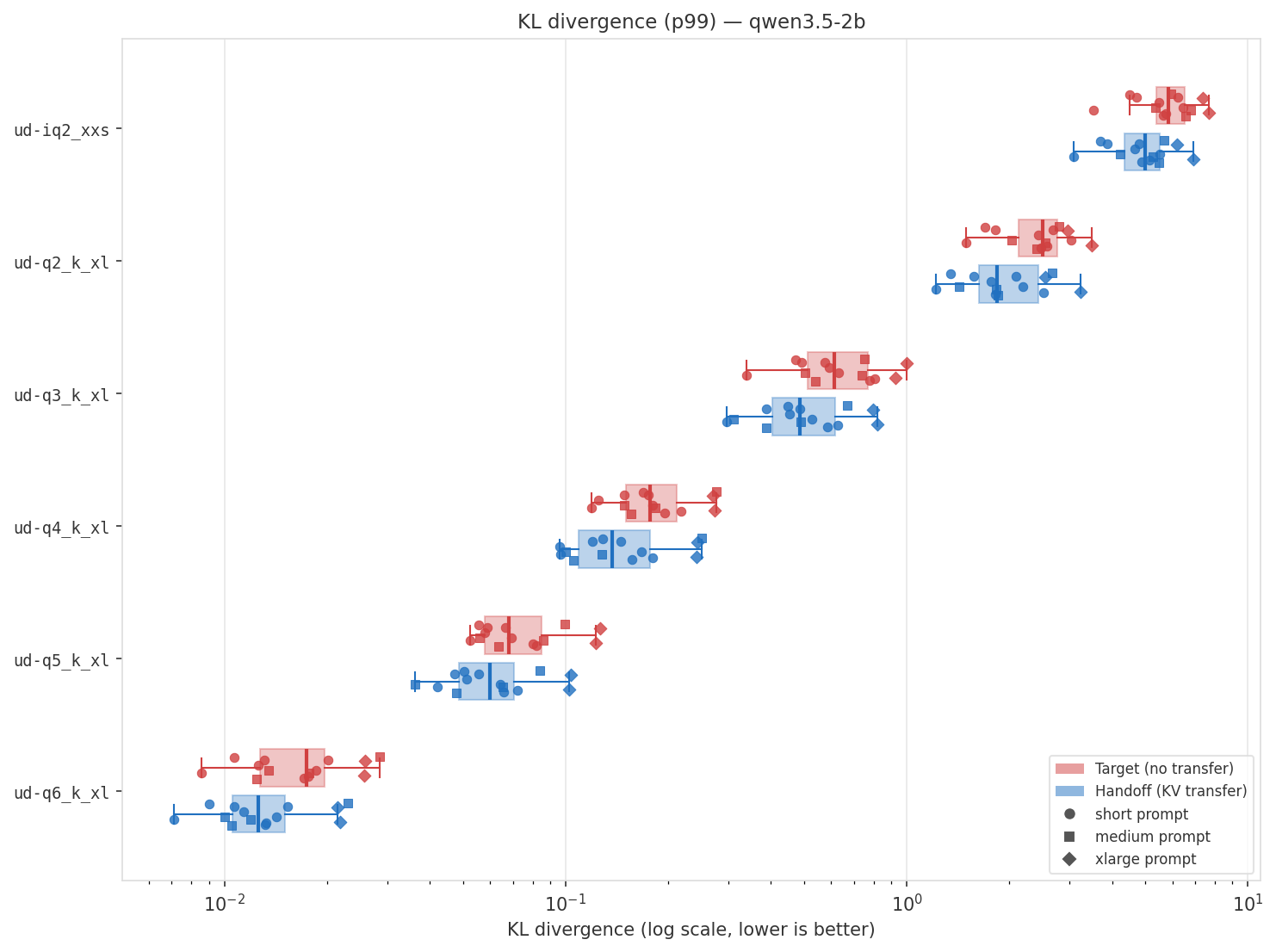

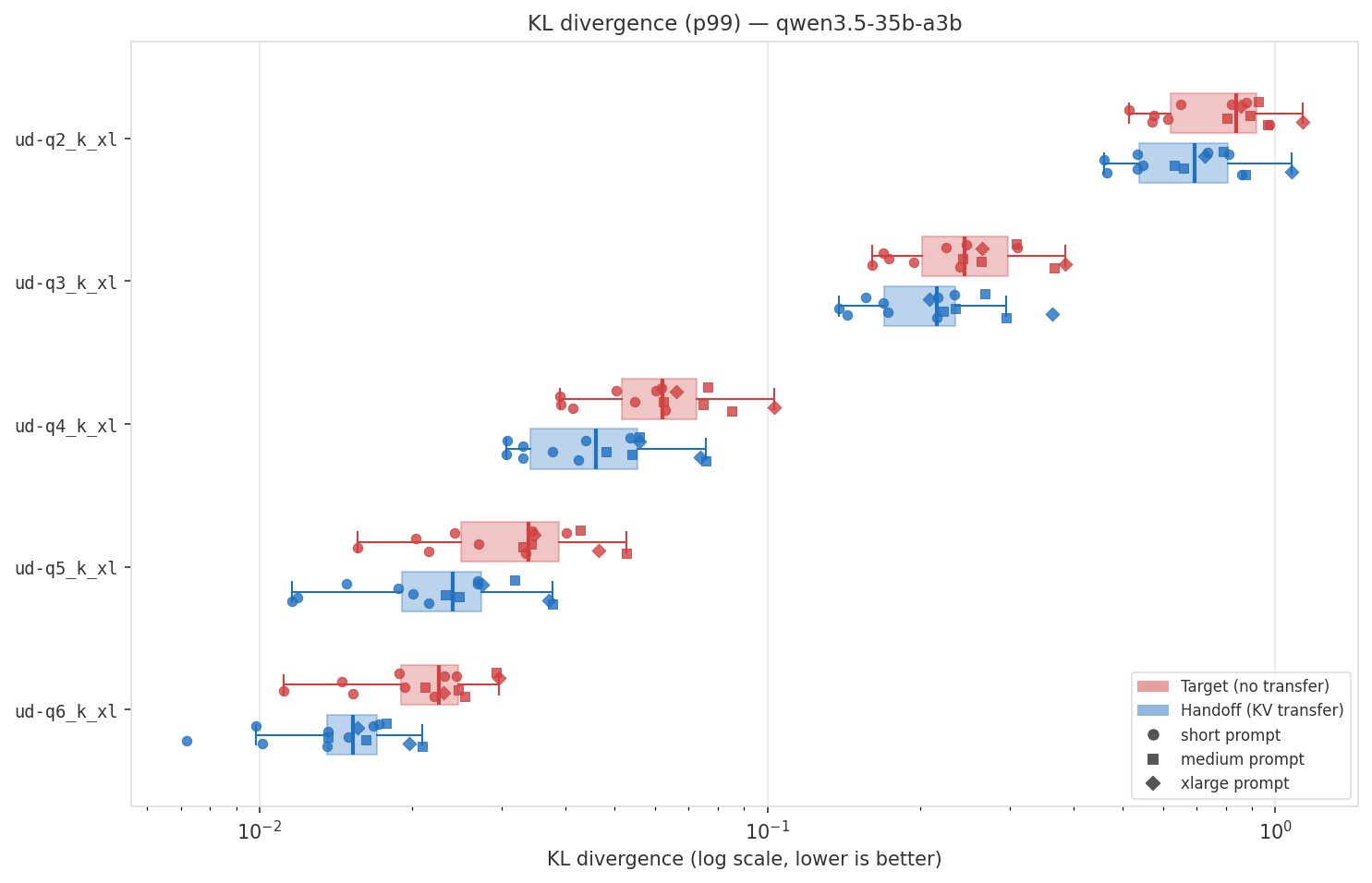

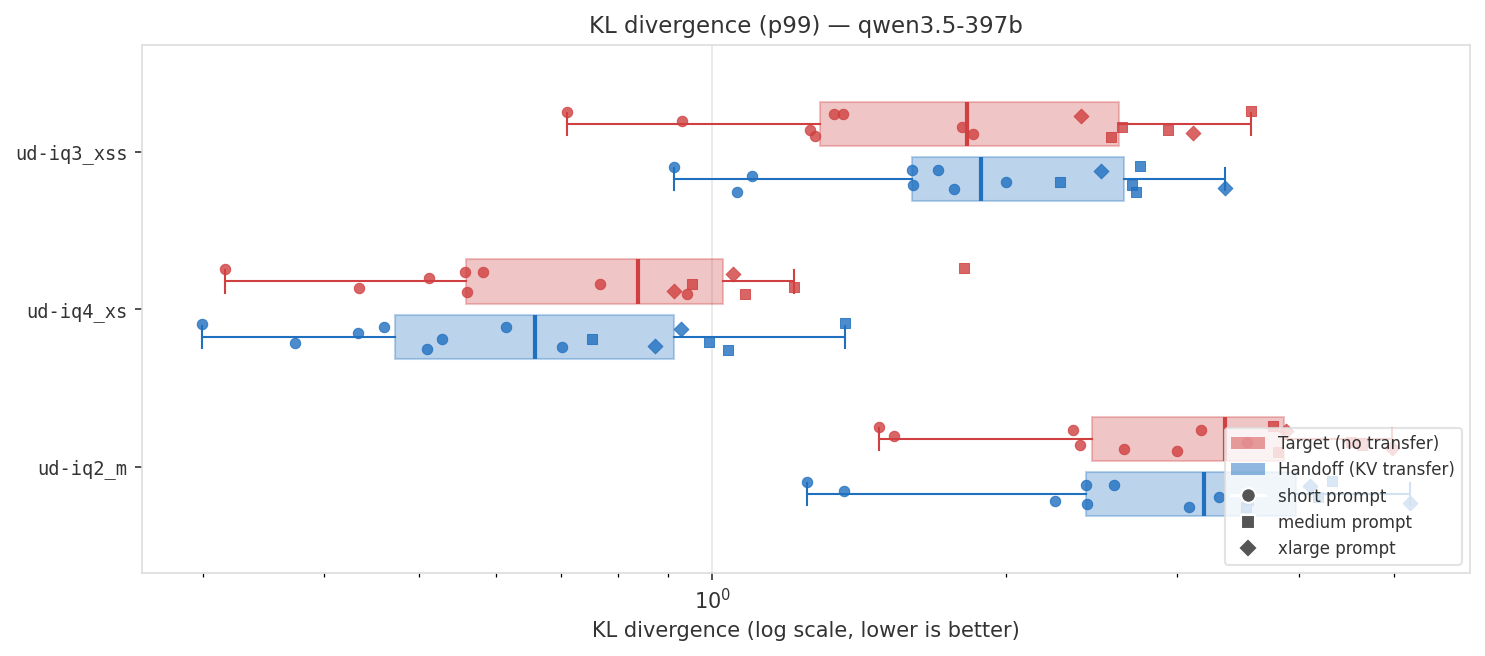

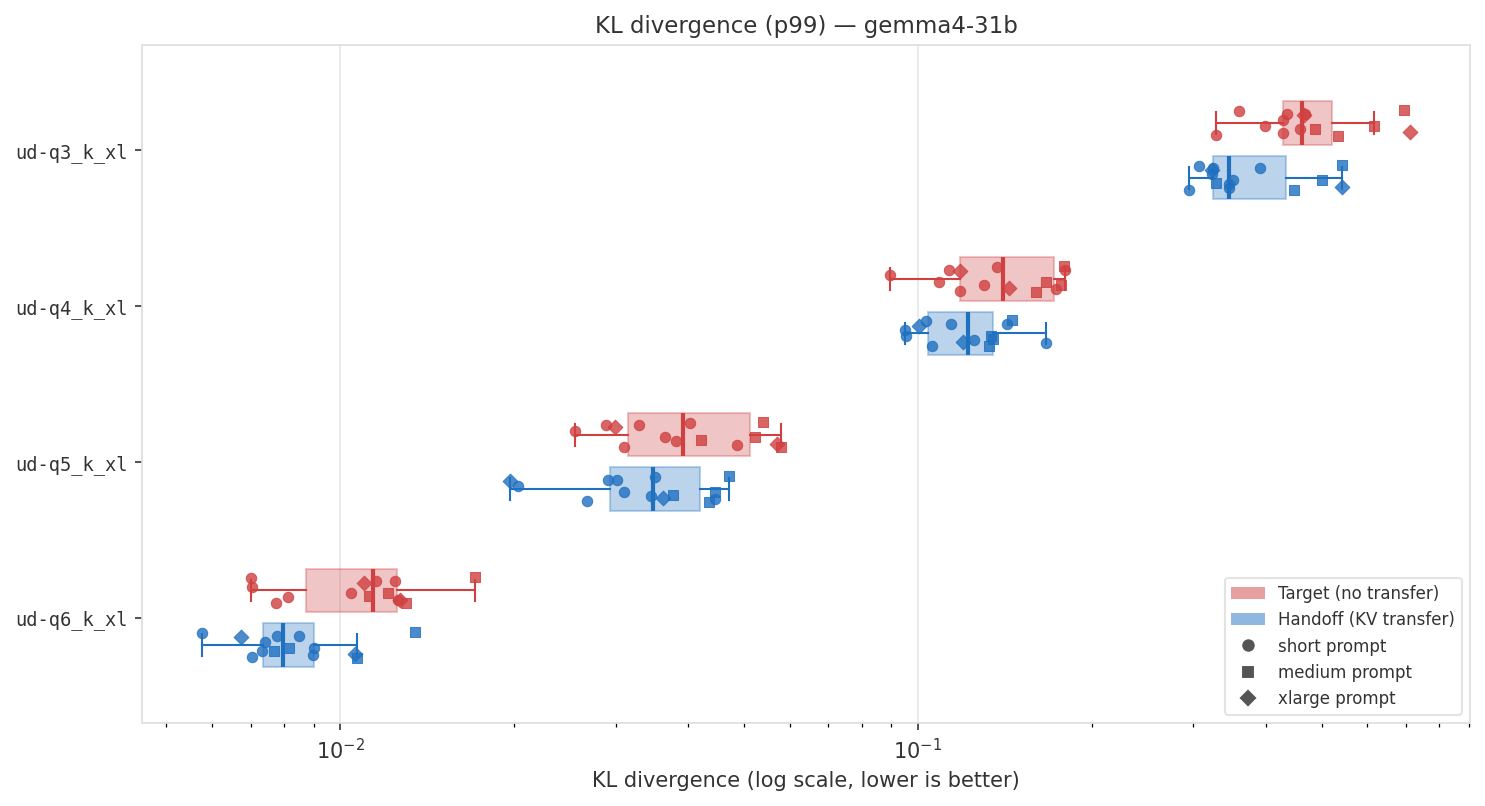

For each model family, the chart shows KL divergence per quantization level - box plots for distribution, with individual prompts overlaid (circles = short, squares = medium, diamonds = large). Red is baseline (no transfer), blue is with KV cache handoff. Lower is better.

Qwen 3.5-2B

Qwen 3.5-35B-A3B

Qwen 3.5-397B-A17B

Gemma 4-31B

As we can see, there’s some improvement for smaller models, but it’s modest - we don’t reach ‘next quant level’ performance. For the large Qwen 3.5-397B model there’s not much improvement at all, though a transfer to a higher quant (from Q8 to Q6) is still worth testing.

Decay study

Let’s also look at decay. As generation moves further away from the prompt and more tokens are processed by the smaller, weaker model, we’d expect the KLD improvement to shrink gradually - the handoff should help more right after the prompt than 1000 tokens later.
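One simple way to look for this decay is to average the per-position improvement (target KLD minus handoff KLD) over consecutive windows of generated tokens. The sketch below assumes that definition of "improvement"; the function name, the window size, and the KLD values are synthetic and only illustrate the bookkeeping, they are not results from the experiment.

```python
def improvement_by_window(target_kld, handoff_kld, window):
    """Mean (target - handoff) KLD for each consecutive window of positions.

    Returns a list of (window_start_position, mean_improvement) pairs.
    """
    if len(target_kld) != len(handoff_kld):
        raise ValueError("KLD sequences must have the same length")
    if window <= 0:
        raise ValueError("window must be positive")

    result = []
    for start in range(0, len(target_kld), window):
        diffs = [
            t - h
            for t, h in zip(
                target_kld[start:start + window],
                handoff_kld[start:start + window],
            )
        ]
        result.append((start, sum(diffs) / len(diffs)))
    return result


# Synthetic per-position KLD values: the baseline stays flat while the
# handoff run drifts toward it as generation proceeds.
target_kld = [0.30] * 8
handoff_kld = [0.10, 0.12, 0.14, 0.16, 0.22, 0.24, 0.26, 0.28]

for start, gain in improvement_by_window(target_kld, handoff_kld, window=4):
    print(f"positions {start}-{start + 3}: mean improvement {gain:.3f}")
```

A positive value means the handoff run is closer to the reference than the baseline in that window; a sequence of values shrinking toward zero is the decay pattern described above.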

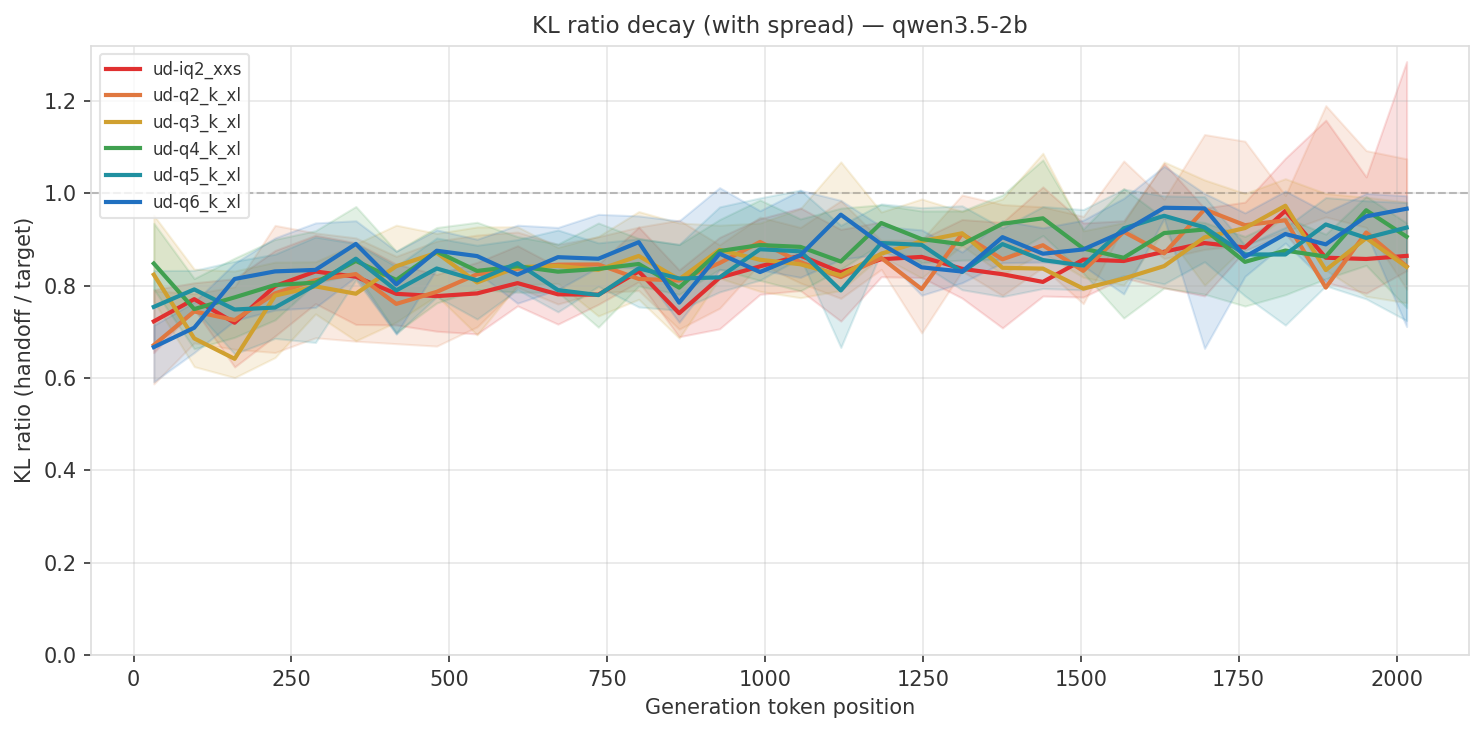

Qwen 3.5-2B

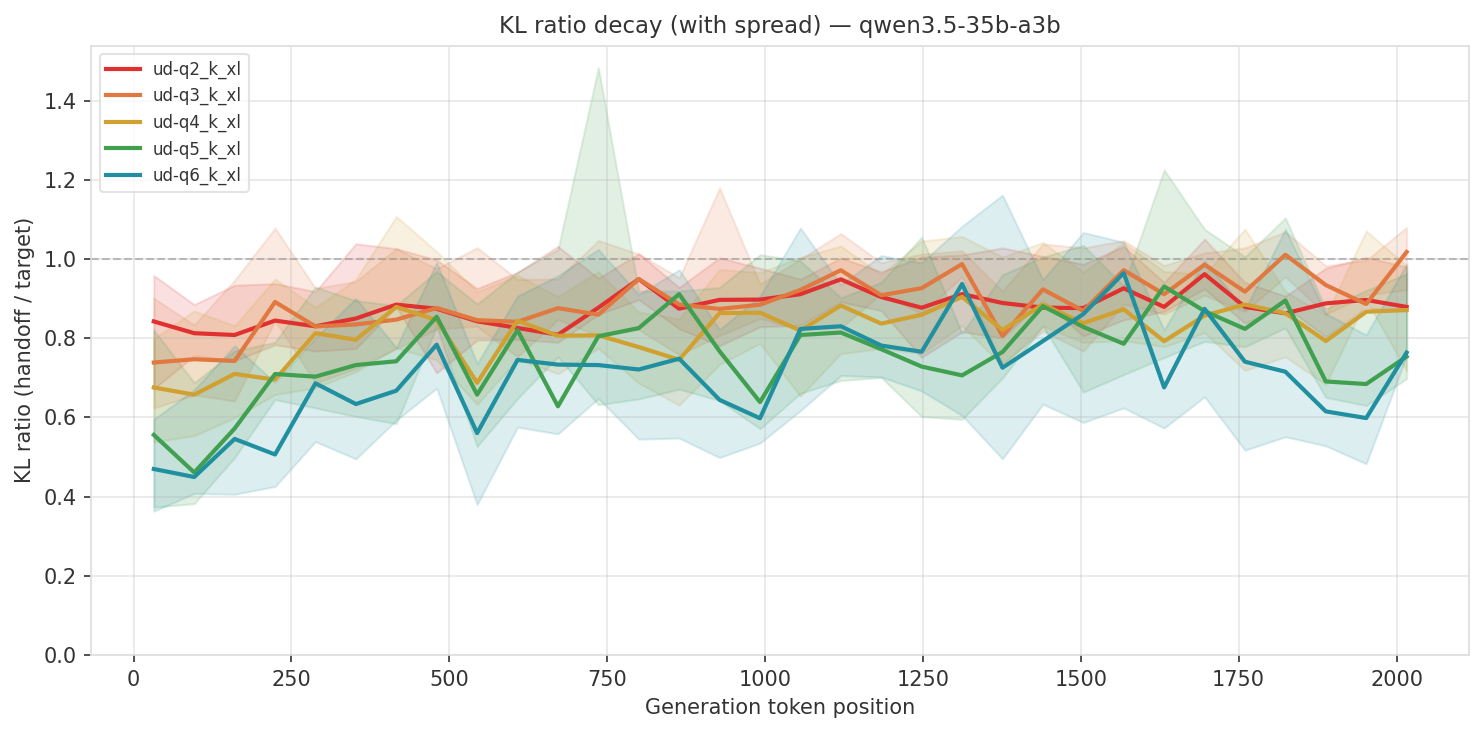

Qwen 3.5-35B-A3B

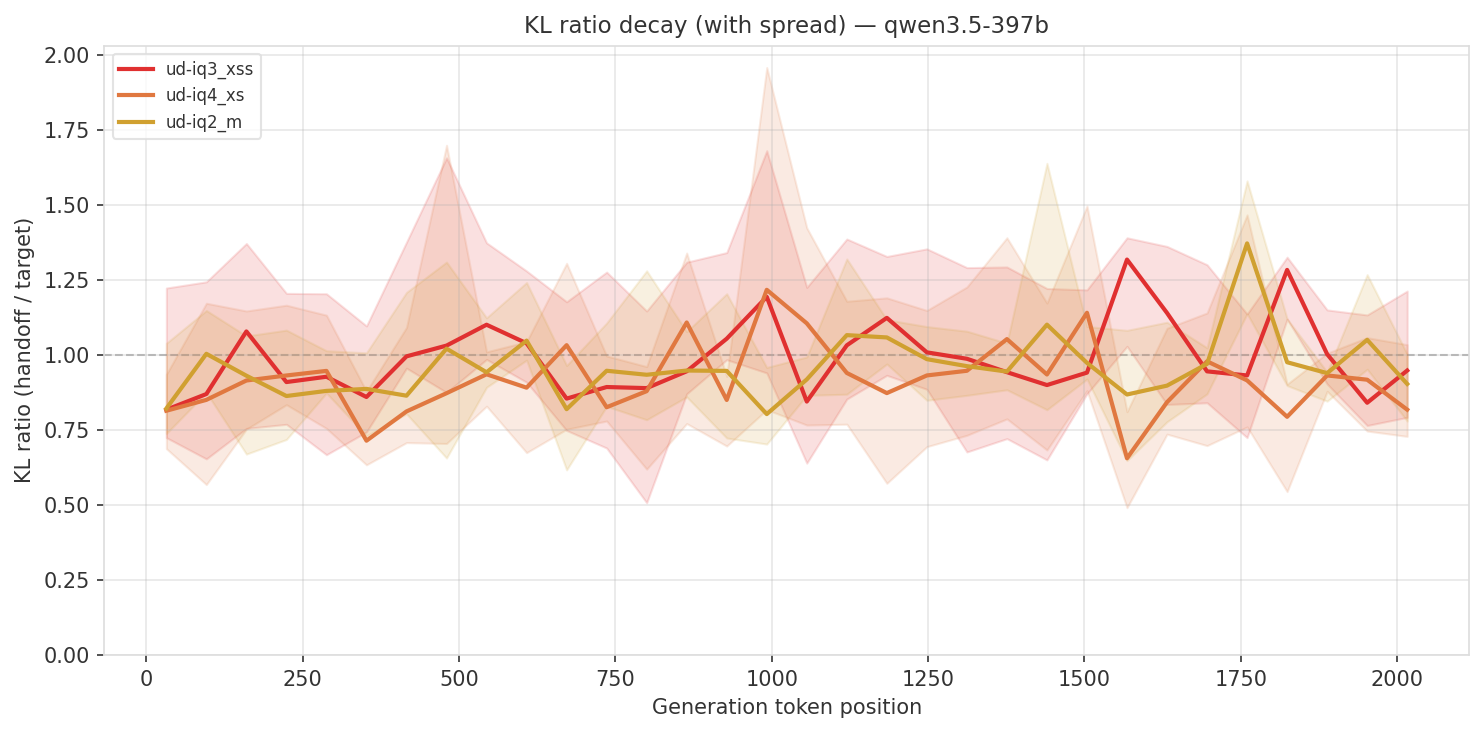

Qwen 3.5-397B-A17B

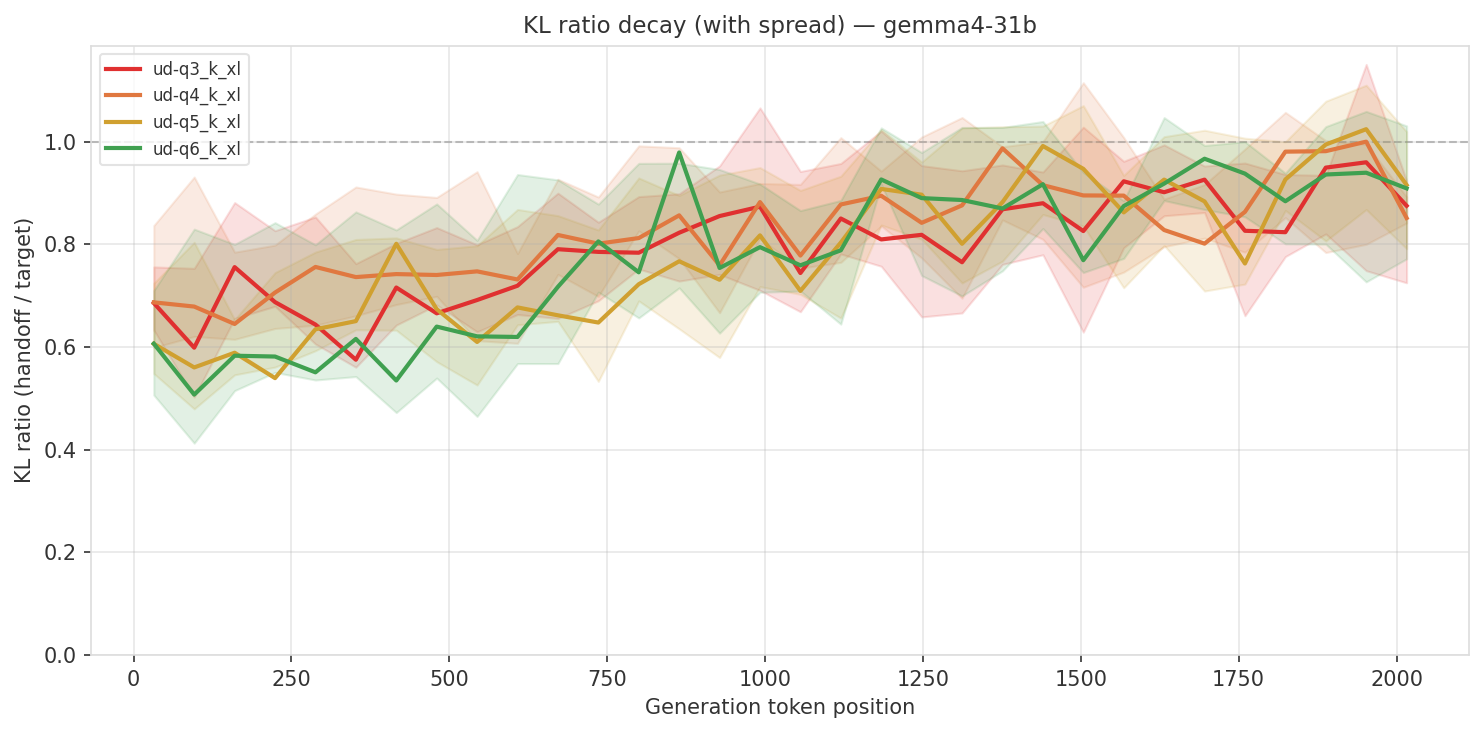

Gemma 4-31B

We can indeed see this pattern for medium-sized models.

Results

Looking at the results, the impact varies by model: it starts fairly strong for some and decays as generation reaches ~2k tokens. It is possible that the impact will be more pronounced with longer prompts. The next relevant tests to explore:

- Run a higher quant for Qwen 3.5-397B;

- Run on longer prompts, 20-30k+ tokens;

- Run a real quality benchmark for some selected configuration, not just KLD, as it doesn’t show the complete picture [3].

References

- [1] llama.cpp - C/C++ LLM inference library

- [2] Unsloth Qwen 3.5 Guide - recommended quantization arguments

- [3] Qwen 3.5 GGUF Benchmarks - quality benchmarks by quantization level

- [4] kv-transfer - experiment code